Researchers Create Drone AI That Can Spot People Fighting

Public violence has severe consequences for many people, whether an altercation involves a handful of individuals or a large group.

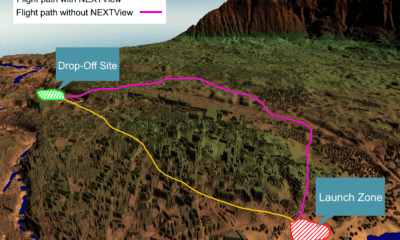

Drones that can fly above crowds, use imaging technology to view the activity below and detect certain types of behaviour could have significant implications for those working in public safety.

Events such as the Boston Marathon bombing and the Manchester Arena bombing – while not in themselves caused by riots – sparked an idea in the minds of one group of researchers: how to create a drone surveillance system that can spot violence and assist in the reduction of public injury during incidents endangering public safety.

The team of researchers, hailing from the UK and India, had a couple of false starts following initial research but have now successfully demonstrated such a drone surveillance system.

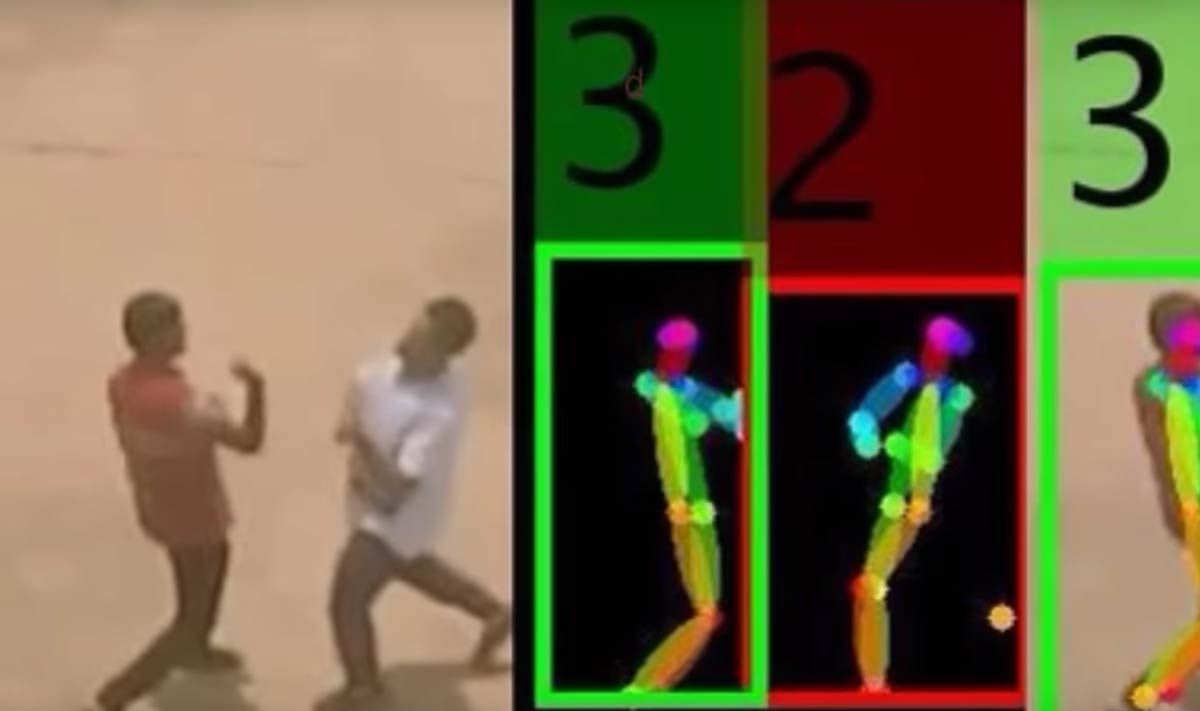

This system uses deep learning algorithms to spot small groups of people fighting in real time, by estimating human poses and then using the orientations of the limbs to identify violent individuals.
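
To make the idea of limb orientation concrete, here is a minimal Python sketch of how the angle of each limb might be computed once body keypoints have been estimated. It is an illustration only, not the researchers' code: the keypoint names, the `LIMBS` list, the helper functions and the coordinates are all assumptions made for this example.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical limb definitions: each limb joins two named keypoints.
LIMBS: List[Tuple[str, str]] = [
    ("shoulder", "elbow"),
    ("elbow", "wrist"),
    ("hip", "knee"),
    ("knee", "ankle"),
]


def limb_angle(start: Point, end: Point) -> float:
    """Direction of the limb from start to end, in degrees from the +x axis."""
    return math.degrees(math.atan2(end[1] - start[1], end[0] - start[0]))


def limb_orientations(keypoints: Dict[str, Point]) -> List[float]:
    """One angle per limb, in the order given by LIMBS."""
    return [limb_angle(keypoints[a], keypoints[b]) for a, b in LIMBS]


# Made-up pixel coordinates for one person (image y grows downward).
pose = {
    "shoulder": (120.0, 80.0),
    "elbow": (150.0, 70.0),
    "wrist": (185.0, 60.0),
    "hip": (118.0, 160.0),
    "knee": (122.0, 210.0),
    "ankle": (125.0, 260.0),
}
print(limb_orientations(pose))
```

A list of angles like this gives a compact description of a pose that a classifier can then label.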

Called ScatterNet Hybrid Deep Learning (SHDL), the proposed network is still in its early stages but has already involved a fair amount of work, including enlisting 25 interns to mimic fights so that a training dataset could be created.

The interns simulated five categories of fighting (punching, kicking, stabbing, strangling and shooting) while being filmed by a Parrot AR drone from 2-8 metres above.

However, to tell which poses equated to violence, the neural network first needed to know which points on each person’s body to detect.

This would have meant a time-consuming job for the research team: marking 18 coordinates on the body of each intern in each of the 10,000-20,000 images needed to train the deep learning algorithms effectively, or at least 180,000 to 360,000 hand-marked points.

Amarjot Singh, a Ph.D. student from the University of Cambridge who is working on the project, came up with a workaround that simplified this process.

His approach combines a feature pyramid network, a common component of object recognition systems that first recognises individual humans, with a support vector machine, which then analyses the poses and assigns each a category of violent or nonviolent.
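
For readers unfamiliar with support vector machines, the following Python sketch shows the classification step in miniature: a linear SVM trained by subgradient descent on the hinge loss, labelling feature vectors as violent (+1) or nonviolent (-1). It is a toy written from scratch for illustration, not the team's implementation. The function names, the hyperparameters and the two-number "pose features" (assumed to be already centred and scaled) are all invented for this example.

```python
from typing import List, Sequence, Tuple


def train_linear_svm(
    samples: Sequence[Sequence[float]],
    labels: Sequence[int],
    epochs: int = 50,
    lr: float = 0.1,
    reg: float = 0.01,
) -> Tuple[List[float], float]:
    """Fit weights w and bias b by subgradient descent on the regularised hinge loss."""
    w = [0.0] * len(samples[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            # L2 regularisation shrinks the weights a little on every step.
            w = [wi * (1.0 - lr * reg) for wi in w]
            if margin < 1.0:
                # Sample is inside the margin or misclassified: move towards it.
                w = [wi + lr * y * xi for wi, xi in zip(w, x)]
                b += lr * y
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    """Return +1 (violent) or -1 (nonviolent) for one feature vector."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if score >= 0 else -1


# Invented toy features, e.g. two normalised limb-angle measurements per pose.
toy_samples = [(1.0, 0.8), (0.8, 1.0), (-1.0, -0.8), (-0.8, -1.0)]
toy_labels = [1, 1, -1, -1]

w, b = train_linear_svm(toy_samples, toy_labels)
print(predict(w, b, (0.9, 0.9)), predict(w, b, (-0.9, -0.9)))
```

A real system would work with many more features per pose and far more training examples, and would more likely rely on an established SVM library than on hand-written training code.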

Singh said this time around the results of the AI training were promising.

“This time we were able to do a relatively better job, because the software was able to run in realtime and does a relatively good job of detecting violent individuals,” he told Spectrum.

The potential of this technology is yet to be fully realised: Singh admits that the accuracy of the system drops considerably as more people are added to the mix.

This means that, for now, we will not see AI drones policing massive events such as concerts and sporting matches.

The researchers do, however, intend to continue their work, and have applied for permission from Indian authorities to test the drone surveillance system at some music festivals.

If the technology does reach the market in a form that public safety agencies can use, Singh says there would always be a human at the helm to monitor any nefarious activity detected.

“The system is not going to go on its own and fly and kill people,” Singh said.