AI Has Already Been Weaponised – and It Shows Why We Should Ban ‘Killer Robots’

A dividing line is emerging in the debate over so-called killer robots. Many countries want to see new international law on autonomous weapon systems that can target and kill people without human intervention. But those countries already developing such weapons are instead trying to highlight their supposed benefits.

I witnessed this growing gulf at a recent UN meeting of more than 70 countries in Geneva, where those in favour of autonomous weapons, including the US, Australia and South Korea, were more vocal than ever. At the meeting, the US claimed that such weapons could actually make it easier to follow international humanitarian law by making military action more precise.

Yet it’s highly speculative to say that “killer robots” will ever be able to follow humanitarian law at all. And while politicians continue to argue about this, the spread of autonomy and artificial intelligence in existing military technology is already effectively setting undesirable standards for its role in the use of force.

A series of open letters by prominent researchers speaking out against weaponising artificial intelligence has helped bring the debate about autonomous military systems to public attention. The problem is that the debate is framed as if this technology belongs to the future. In fact, existing systems already raise the very questions it poses.

Most air defence systems already have significant autonomy in the targeting process, and military aircraft have highly automated features. This means “robots” are already involved in identifying and engaging targets.

Humans still press the trigger, but for how long? Burlingham/Shutterstock

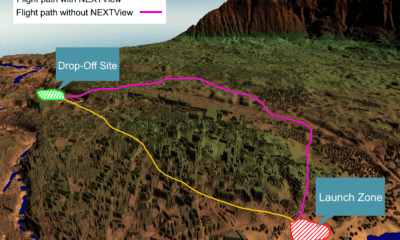

Meanwhile, another important question raised by current technology is missing from the ongoing discussion. Several countries' militaries currently use remotely operated drones to drop bombs on targets. But we know from incidents in Afghanistan and elsewhere that drone images aren’t enough to clearly distinguish between civilians and combatants. We also know that current AI technology can contain significant bias that affects its decision-making, often with harmful effects.

As future fully autonomous aircraft are likely to be used in similar ways to drones, they will probably follow the practices established with drones. Yet states using existing autonomous technologies are excluding them from the wider debate by referring to them as “semi-autonomous” or so-called “legacy systems”. Again, this makes the issue of “killer robots” seem more futuristic than it really is. It also prevents the international community from taking a closer look at whether these systems are fundamentally appropriate under humanitarian law.

Several key principles of international humanitarian law require deliberate human judgements that machines are incapable of making. For example, the legal definition of who is a civilian and who is a combatant isn’t written in a way that could be programmed into AI, and machines lack the situational awareness and inferential ability needed to make this decision.

Invisible decision making

More profoundly, the more that targets are chosen and potentially attacked by machines, the less we know about how those decisions are made. To choose their proposed targets, drones already rely heavily on intelligence data processed by “black box” algorithms that are very difficult to understand. This makes it harder for the human operators who actually press the trigger to question target proposals.

As the UN continues to debate this issue, it’s worth noting that most countries in favour of banning autonomous weapons are developing countries, which are typically less likely to attend international disarmament talks. So their willingness to speak out strongly against autonomous weapons is all the more significant. Their history of experiencing interventions and invasions from richer, more powerful countries (such as some of those in favour of autonomous weapons) also reminds us that they are most at risk from this technology.

Given what we know about existing autonomous systems, we should be very concerned that “killer robots” will make breaches of humanitarian law more, not less, likely. This threat can only be prevented by negotiating new international law curbing their use.

Ingvild Bode, Senior Lecturer in International Relations, University of Kent

This article was originally published on The Conversation. Read the original article.