
Drone Uses AI to Overcome Turbulence

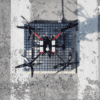

Takeoffs and landings are often the two trickiest parts of multi-rotor drone flights. Autonomous drones in particular tend to wobble because of turbulence, inching slowly toward a landing until power is finally cut and they drop the remaining distance to the ground.

Now artificial intelligence experts, in a joint project with control experts, are developing a system that uses a deep neural network to help autonomous drones “learn” how to land more safely and quickly while using less power. The system, dubbed “Neural Lander,” is a learning-based controller that tracks a drone’s position and speed and modifies its landing trajectory and rotor speed accordingly to achieve the smoothest possible landing. The new system was tested in the three-story-tall aerodrome at Caltech’s Center for Autonomous Systems and Technologies (CAST).

The project is a collaboration between Caltech artificial intelligence (AI) experts Anima Anandkumar, Bren Professor of Computing and Mathematical Sciences; Yisong Yue, assistant professor of computing and mathematical sciences; and Soon-Jo Chung, Bren Professor of Aerospace in the Division of Engineering and Applied Science (EAS) and research scientist at JPL, which Caltech manages for NASA.

Chung says, “This project has the potential to help drones fly more smoothly and safely, especially in the presence of unpredictable wind gusts, and eat up less battery power as drones can land more quickly.”

To ensure that the deep neural network (DNN) guides the drone smoothly, the team employed a technique known as spectral normalization, which smooths out the neural net’s outputs so that it doesn’t make wildly varying predictions as inputs or conditions shift. Improvements in landing were measured by examining deviation from an idealized trajectory in 3D space. Three types of tests were conducted: a straight vertical landing; a descending arc landing; and flight in which the drone skims across a broken surface, such as over the edge of a table, where the effect of turbulence from the ground would vary sharply.
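As a rough illustration of the idea behind spectral normalization, the sketch below rescales a weight matrix so that its largest singular value (its spectral norm) equals a chosen bound. With 1-Lipschitz activations such as ReLU, the product of the layers' spectral norms bounds how much the network's output can change for a given change in input, which is the sense in which predictions are kept from varying wildly. This is a minimal pure-Python sketch, not the team's implementation: the function names, the `max_gain` parameter, and the example matrix are all hypothetical, and the spectral norm is estimated with plain power iteration.

```python
import math
import random


def _matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def _transpose_matvec(W, u):
    """Multiply the transpose of W by vector u."""
    return [sum(W[i][j] * u[i] for i in range(len(W))) for j in range(len(W[0]))]


def _norm(v):
    """Euclidean norm of a vector."""
    return math.sqrt(sum(x * x for x in v))


def spectral_norm(W, iters=100, seed=0):
    """Estimate the largest singular value of W by power iteration."""
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in W[0]]
    n = _norm(v)
    v = [x / n for x in v]
    for _ in range(iters):
        u = _matvec(W, v)
        nu = _norm(u)
        if nu == 0.0:
            return 0.0
        u = [x / nu for x in u]
        v = _transpose_matvec(W, u)
        nv = _norm(v)
        if nv == 0.0:
            return 0.0
        v = [x / nv for x in v]
    return _norm(_matvec(W, v))


def spectrally_normalize(W, max_gain=1.0):
    """Return a copy of W rescaled so its spectral norm equals max_gain.

    max_gain is a hypothetical per-layer bound chosen for illustration.
    An all-zero matrix is returned unchanged.
    """
    sigma = spectral_norm(W)
    if sigma == 0.0:
        return [row[:] for row in W]
    scale = max_gain / sigma
    return [[w * scale for w in row] for row in W]


# Hypothetical 2x2 layer whose largest singular value is 3.
W = [[3.0, 0.0], [0.0, 1.0]]
W_sn = spectrally_normalize(W)
# Prints approximately 3.0 and 1.0.
print(spectral_norm(W), spectral_norm(W_sn))
```

In practice a deep learning framework would apply this rescaling to every layer during training; the version here only shows the per-layer arithmetic.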

The new system decreased vertical error by 100 percent, which allowed for controlled landings, and reduced lateral drift by up to 90 percent. In the experiments, the new system brought the drone all the way to an actual landing. Further, during the skimming test, the Neural Lander produced a much smoother transition as the drone moved from skimming across the table to flying in the free space beyond the edge. “This interdisciplinary effort brings experts from machine learning and control systems. We have barely started to explore the rich connections between the two areas,” Anandkumar says.

A paper describing the Neural Lander, titled “Neural Lander: Stable Drone Landing Control Using Learned Dynamics,” was presented at the Institute of Electrical and Electronics Engineers (IEEE) International Conference on Robotics and Automation on May 22. The paper’s co-authors include Rose Yu of Northeastern University and Kamyar Azizzadenesheli of UC Irvine. This research was funded by CAST and Raytheon Company.

#ICRA2019 We will present Neural Lander today @ POD 22, 4-5pm

One of the first instances of provably robust deep learning based controllers! We show Lyapunov stability while using a deep NN as part of the controller design. Experiments on real robots too! https://t.co/mVQVGhjnnV pic.twitter.com/HJvH4mxX4w (Guanya Shi, @GuanyaShi, May 22, 2019)

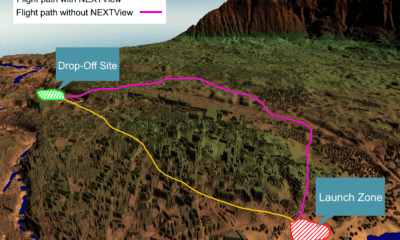

Besides its obvious commercial applications (Chung and his colleagues have filed a patent on the new system), it could prove crucial to projects currently under development at CAST, including an autonomous medical transport that can land in difficult-to-reach locations (such as gridlocked traffic).

Citation: Neural Lander: Stable Drone Landing Control using Learned Dynamics, Guanya Shi, Xichen Shi, Michael O’Connell, Rose Yu, Kamyar Azizzadenesheli, Animashree Anandkumar, Yisong Yue, Soon-Jo Chung, https://arxiv.org/abs/1811.08027