
New Smarter A.I. Image Recognition from Stanford

As technology evolves, it increasingly mimics human senses: sensors and devices stand in for our organs, and programming lets us mimic our own brain by way of artificial intelligence (A.I.).

This is how image recognition came about. Image-processing programs break down an image, or a frozen frame captured by a camera, according to its color values (in a scheme such as RGB or CMYK), saturation, hue, the locations of differently colored pixels and so on. That is a lot to work with. By processing the captured colors we can identify objects and features, recognize people and animals, predict the motion and profile of moving objects, and use video to estimate the distance of an object from the camera or the relative distances between objects. The list goes on and on.
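To make the idea of breaking an image down by color, hue and saturation concrete, here is a minimal sketch using only Python's standard `colorsys` module. The tiny four-pixel "image" and the helper names `describe_pixel` and `dominant_channel` are made up for illustration; real image-processing software works on full frames and is far more involved.

```python
import colorsys


def describe_pixel(r, g, b):
    """Convert an 8-bit RGB pixel to (hue in degrees, saturation, value)."""
    h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    return round(h * 360.0, 1), round(s, 3), round(v, 3)


def dominant_channel(pixels):
    """Return which of R, G or B has the largest total over all pixels."""
    totals = [sum(p[i] for p in pixels) for i in range(3)]
    return "RGB"[totals.index(max(totals))]


# Hypothetical 2x2 image, flattened to a list of (R, G, B) tuples.
image = [(255, 0, 0), (200, 30, 30), (10, 10, 240), (250, 20, 5)]

for pixel in image:
    print(pixel, "->", describe_pixel(*pixel))
print("Dominant channel:", dominant_channel(image))
```

Even this toy version shows why the workload grows quickly: every pixel of every frame needs this kind of arithmetic before any object can be identified.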

But all of that is easier said than done. Fast and accurate image processing requires extremely expensive and powerful computers or processors, so there is a massive trade-off between speed, accuracy, price and space.

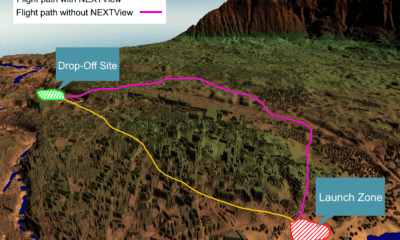

That, however, may be about to change with Stanford’s recently developed artificially intelligent camera system, which is optimized for speed and energy efficiency in a compact, convenient package. It was originally aimed at improving the performance of image acquisition systems in drones, other aircraft and modern vehicles, as well as artificially intelligent ships.

Stanford’s work, ‘Hybrid optical-electronic convolutional neural networks with optimized diffractive optics for image classification’, was published on August 17, 2018 in the journal Scientific Reports.

“That autonomous car you just passed has a relatively huge, relatively slow, energy intensive computer in its trunk,” said Gordon Wetzstein, the assistant professor of electrical engineering at Stanford who led the research.

So much for the good news. Now, how did they do it?

Not many people realize this, but not every computer suits every task. Some computer configurations handle 3D rendering better than gaming, and vice versa. Similarly, there are optical computers optimized for optical analysis and image processing; such a computer can separate colors and optical properties considerably better than a standard digital processor. However, while it might very easily tell you that your face has two dark disks, it cannot identify those disks as pupils.

That is where standard digital processors come in. These are the processors we normally use, capable of mathematical calculations and simple if-else logic to make decisions and form classifications. What Stanford graduate student Julie Chang did was essentially combine the two, forming a hybrid optical-electronic computer that works very effectively for image processing.
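The division of labor can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' method: the function `convolve2d` stands in, in software, for the filtering that the optical stage would perform on incoming light, and `classify` stands in for the electronic stage with a single if-else rule. The hand-written kernel, the threshold of 4.0 and the 5x5 test images are all made up for the example; in a convolutional neural network such filters are learned from data.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and return the map of weighted sums.

    Software stand-in for the filtering stage (no padding, stride 1).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = []
    for y in range(out_h):
        row = []
        for x in range(out_w):
            acc = 0.0
            for j in range(kh):
                for i in range(kw):
                    acc += image[y + j][x + i] * kernel[j][i]
            row.append(acc)
        out.append(row)
    return out


def classify(feature_map, threshold=4.0):
    """Decision stage: a simple if-else rule on the strongest response."""
    peak = max(max(row) for row in feature_map)
    return "dark spot" if peak >= threshold else "no dark spot"


# Hypothetical kernel: responds strongly to a dark pixel with bright neighbours.
DARK_SPOT_KERNEL = [
    [1, 1, 1],
    [1, -8, 1],
    [1, 1, 1],
]

# Brightness values in 0..1: a bright 5x5 patch with one dark pixel in the
# middle, and a uniformly bright patch for comparison.
with_spot = [[1.0] * 5 for _ in range(5)]
with_spot[2][2] = 0.0
uniform = [[1.0] * 5 for _ in range(5)]

print(classify(convolve2d(with_spot, DARK_SPOT_KERNEL)))
print(classify(convolve2d(uniform, DARK_SPOT_KERNEL)))
```

The nested loops in `convolve2d` are where nearly all of the arithmetic happens, which is why moving that stage out of the electronics is attractive, while the decision in `classify` is cheap by comparison.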

So just how effective is this computer? Does it live up to its claims?

Well, the results speak for themselves. In both real-world experiments and intensive simulations, the system was able to identify objects and vehicles such as planes, cars and bikes, as well as distinguish between cats, dogs and other animals. Conventional A.I. systems can do this too, but they rely entirely on power-hungry electronic computation to get there.

The research, as stated, was led by Dr. Gordon Wetzstein and initiated by graduate student Julie Chang. The other co-authors are Stanford doctoral candidate Vincent Sitzmann and two researchers from King Abdullah University of Science and Technology in Saudi Arabia, Xiong Dun and Wolfgang Heidrich.

Reference: Hybrid optical-electronic convolutional neural networks with optimized diffractive optics for image classification, Julie Chang, Vincent Sitzmann, Xiong Dun, Wolfgang Heidrich & Gordon Wetzstein, Scientific Reports volume 8, Article number: 12324 (2018) – https://doi.org/10.1038/s41598-018-30619-y