Fly-by-Eye, A Drone Controlled by Eye Movement

Drones are everywhere nowadays, yet most people find them tedious and laborious to use. Controlling a drone isn’t the most intuitive thing in the world, so commercial drone manufacturers and robotics experts have been coming up with all kinds of creative solutions, loading drones with various obstacle-avoidance systems to make them easier to manoeuvre. There are several ways of balancing convenience and capability: body control, face control, and even brain control.

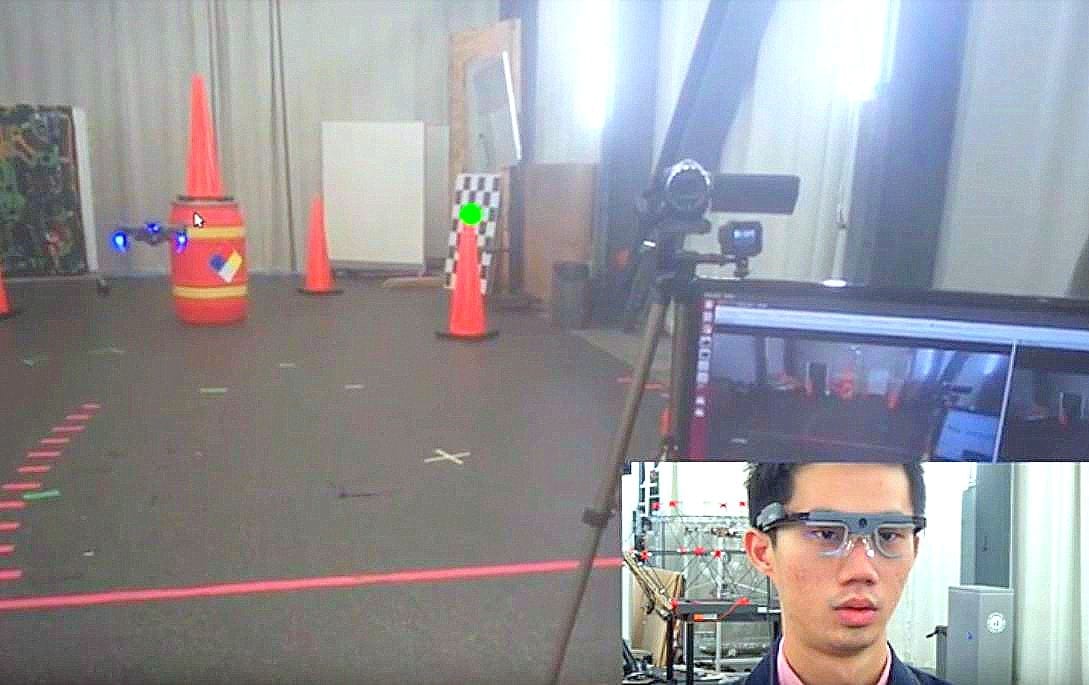

Convenience comes at a cost, however: the more convenient the control method, the more processing power, infrastructure, or even brain probes it tends to require. An article by Evan Ackerman in IEEE Spectrum reports that robotics experts from the University of Pennsylvania, the U.S. Army Research Laboratory, and New York University have come up with a method based on the user’s gaze. When the user is equipped with a pair of lightweight gaze-tracking glasses and a small computing unit, a small drone will fly wherever the user looks.

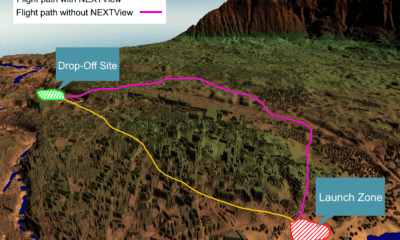

This gaze-controlled drone differs from previous systems in that it is self-contained and doesn’t rely on external sensors, which until now have been required to make a control system user-relative instead of drone-relative. With a conventional drone remote, the perspective is drone-relative: when the user commands ‘go left’, the drone goes to its own left, irrespective of where the user is. From the user’s perspective it may therefore go right, forwards, or backwards, depending on its orientation relative to the user.
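The difference between the two perspectives comes down to a rotation between frames. The snippet below is a minimal sketch of that idea, not the researchers’ implementation: it assumes a flat, two-dimensional world, yaw angles in radians measured counter-clockwise in a shared reference frame, and a hypothetical helper name `user_to_drone_command`.

```python
import math


def user_to_drone_command(cmd_user, user_yaw, drone_yaw):
    """Rotate a planar command from the user's frame into the drone's frame.

    cmd_user  -- (forward, left) components as the user perceives them
    user_yaw  -- user's heading in radians (assumed known)
    drone_yaw -- drone's heading in radians (assumed known)
    Returns the (forward, left) components the drone should execute.
    """
    # Rotating by the heading difference maps user-frame axes onto drone-frame axes.
    delta = user_yaw - drone_yaw
    c, s = math.cos(delta), math.sin(delta)
    fwd, left = cmd_user
    return (c * fwd - s * left, s * fwd + c * left)


# Illustrative case: the drone faces the user, so the headings differ by 180 degrees.
# A user-relative "go left" then becomes a "go right" in the drone's own frame.
fwd, left = user_to_drone_command((0.0, 1.0), user_yaw=0.0, drone_yaw=math.pi)
```

In this sketch a negative `left` component means the drone moves to its own right, which is exactly the mismatch a drone-relative remote leaves the pilot to resolve mentally.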

For user-relative control to work properly, the control system has to know the location and orientation of both the drone and the controller. The trick is to localize the drone relative to the user without having to invest in a motion-capture system or even rely on GPS.

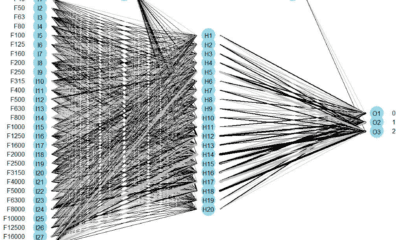

The team used Tobii Pro Glasses 2, a lightweight, non-invasive, wearable eye-tracking system that also includes an IMU and an HD camera. The glasses are hooked up to a portable NVIDIA Jetson TX2, which provides both a CPU and a GPU. With the glasses on, the user just has to look at the drone; the camera on the glasses detects it using a deep neural network, and the system calculates how far away it is from its apparent size. Together with head-orientation data from the IMU, this allows the system to estimate where the drone is relative to the user.
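Estimating range from apparent size can be illustrated with the standard pinhole camera relation, in which distance equals focal length times real width divided by width in the image. The following is a rough sketch under stated assumptions rather than the method used in the paper: the focal length, drone width, and bounding-box width are made-up illustrative values, the function names are hypothetical, and the drone is assumed to sit at the centre of the image so that head yaw and pitch alone give its direction.

```python
import math


def estimate_distance(focal_px, real_width_m, bbox_width_px):
    """Pinhole-camera range estimate: distance = f * W / w."""
    return focal_px * real_width_m / bbox_width_px


def drone_position(distance_m, head_yaw, head_pitch):
    """Place the drone in a user-centred frame (x forward, y left, z up).

    Assumes the drone is centred in the camera image, so the head's yaw and
    pitch (radians, e.g. from an IMU) point straight at it.
    """
    horizontal = distance_m * math.cos(head_pitch)
    return (
        horizontal * math.cos(head_yaw),
        horizontal * math.sin(head_yaw),
        distance_m * math.sin(head_pitch),
    )


# Illustrative numbers only: a 0.5 m wide drone that appears 100 px wide
# through a camera with a 1000 px focal length.
distance = estimate_distance(focal_px=1000.0, real_width_m=0.5, bbox_width_px=100.0)
position = drone_position(distance, head_yaw=0.0, head_pitch=0.0)
```

A real system would also need to account for the drone’s offset from the image centre and for noise in the detector’s bounding box, both of which this sketch ignores.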

The researchers hope their system will enable people with limited drone experience to fly drones safely and effectively. This would be of immense benefit in inspection and first-response scenarios, and especially for people whose disabilities affect their mobility.

“Human Gaze-Driven Spatial Tasking of an Autonomous MAV,” by Liangzhe Yuan, Christopher Reardon, Garrett Warnell, and Giuseppe Loianno, from the University of Pennsylvania, U.S. Army Research Laboratory, and New York University, has already been submitted to ICRA 2019 and IEEE Robotics and Automation Letters.