
Minimizing Shadows in UAV-Based Orthomosaics

Shadows cast by buildings, terrain, and other elevated features represent lost or impaired data values, degrading the quality of optical imagery acquired under all but the most diffuse illumination conditions.

This is particularly problematic in high-spatial-resolution imagery acquired from drones, or unmanned aerial vehicles (UAVs), which operate very close to the ground. Still, the flexibility and low redeployment cost of the platform also present opportunities, and a new workflow designed to eliminate shadows from UAV-based orthomosaics capitalizes on them.

Identifying and Eliminating Shadows From Individual Orthophoto Components

In a new paper, a group of researchers from the University of Calgary first identify and then eliminate shadows from individual orthophoto components, then construct the final orthomosaic using a feature-matching strategy in the commercial software package Agisoft PhotoScan. They demonstrate the utility of their strategy over a treed-wetland study site in northwestern Alberta, Canada.

As the authors note:

“The sharp increase in high-density data, combined with easily accessed structure-from-motion (SfM) algorithms for extracting 3D surface models, has greatly enhanced our capacity to generate high-resolution orthomosaics (Colomina and Molina 2014). While shadows are a prominent and often-troublesome feature of UAV-derived orthomosaics (e.g. Lovitt et al. 2017), the flexibility of the platform provides unique opportunities for integrating the multitemporal data required by replacement-type shadow-compensation algorithms. In this paper, we propose a new UAV-based data collection and processing workflow designed to produce seamless, shadow-free orthomosaics. We demonstrate our techniques over a vegetated study area in northwestern Alberta, Canada.”

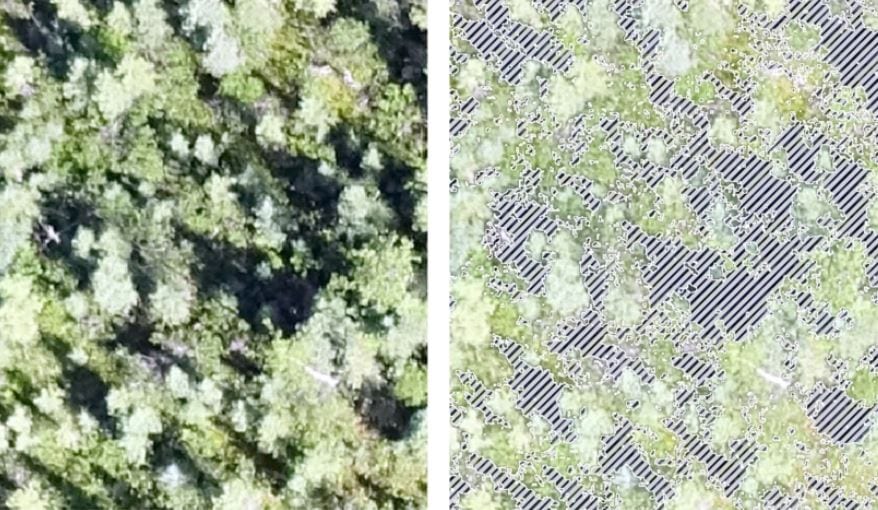

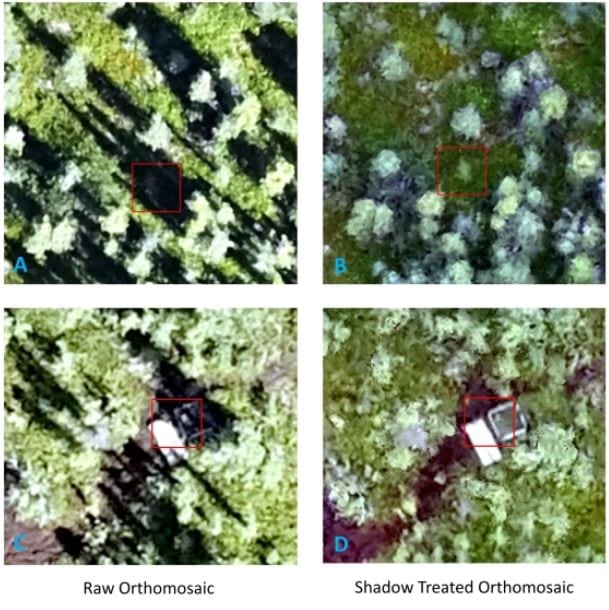

The study site is a complex scene containing a wide variety of shadows, which the authors’ workflow largely eliminates. Shadow-reduced orthomosaics are less useful for feature-identification tasks that rely on shadow as an element of image interpretation, but they provide a superior foundation for most other image-processing routines, such as classification and change detection.

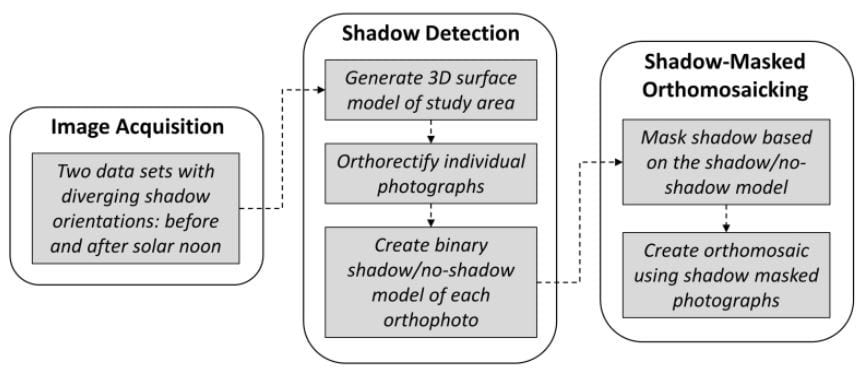

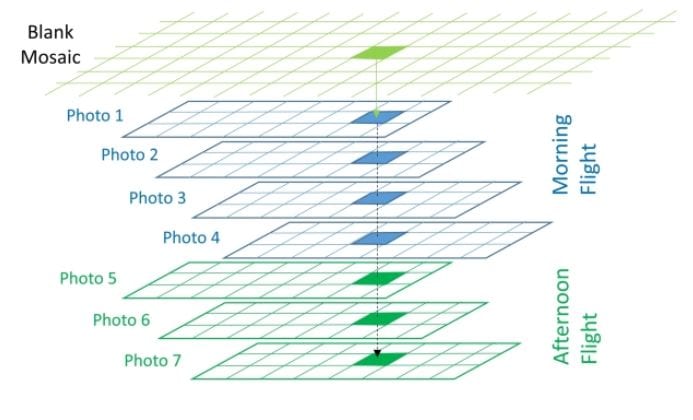

A workflow for creating shadow-reduced orthomosaics from two-pass UAV photography.

A diagram displaying a simple conceptual model for identifying overlapping pixels from individual orthophotos.
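The article does not describe the authors’ conceptual model in detail. One common way to pair overlapping pixels between two georeferenced orthophotos is to map each pixel centre to ground coordinates and then back into the other image’s pixel grid. The minimal sketch that follows assumes GDAL-style affine geotransforms for north-up images; the function names, image sizes, and coordinates are hypothetical and are not taken from the paper.

```python
import math
from typing import List, Tuple

# Hypothetical GDAL-style affine geotransform:
# (x_origin, pixel_width, row_rotation, y_origin, col_rotation, pixel_height)
GeoTransform = Tuple[float, float, float, float, float, float]
PixelPair = Tuple[Tuple[int, int], Tuple[int, int]]


def pixel_center_to_world(gt: GeoTransform, col: int, row: int) -> Tuple[float, float]:
    """Return the map coordinates of the centre of pixel (col, row)."""
    c, r = col + 0.5, row + 0.5
    x = gt[0] + c * gt[1] + r * gt[2]
    y = gt[3] + c * gt[4] + r * gt[5]
    return x, y


def world_to_pixel(gt: GeoTransform, x: float, y: float) -> Tuple[int, int]:
    """Invert a north-up geotransform (rotation terms must be zero)."""
    if gt[2] != 0 or gt[4] != 0:
        raise ValueError("this sketch only handles north-up orthophotos")
    col = math.floor((x - gt[0]) / gt[1])
    row = math.floor((y - gt[3]) / gt[5])
    return col, row


def overlapping_pixels(gt_a: GeoTransform, shape_a: Tuple[int, int],
                       gt_b: GeoTransform, shape_b: Tuple[int, int]) -> List[PixelPair]:
    """Pair each pixel (row, col) of orthophoto A with the pixel of B covering
    the same ground point. Shapes are (rows, cols); pixels of A outside B are skipped."""
    rows_b, cols_b = shape_b
    pairs: List[PixelPair] = []
    for row in range(shape_a[0]):
        for col in range(shape_a[1]):
            x, y = pixel_center_to_world(gt_a, col, row)
            col_b, row_b = world_to_pixel(gt_b, x, y)
            if 0 <= row_b < rows_b and 0 <= col_b < cols_b:
                pairs.append(((row, col), (row_b, col_b)))
    return pairs


if __name__ == "__main__":
    # Two 4 x 4 orthophotos with 0.5 m pixels; B starts 1 m (2 pixels) further east.
    gt_pre = (500000.0, 0.5, 0.0, 6000000.0, 0.0, -0.5)
    gt_post = (500001.0, 0.5, 0.0, 6000000.0, 0.0, -0.5)
    for pair in overlapping_pixels(gt_pre, (4, 4), gt_post, (4, 4)):
        print(pair)
```

Using pixel centres rather than corners keeps each ground point safely inside one pixel of the other image, so small floating-point errors do not flip a pixel onto its neighbour.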

Collecting Two Sets of UAV Photographs to Identify and Mask Shadows

The authors acquire two sets of UAV photographs, one from a pre-noon flight and one from a post-noon flight, so that shadows fall in different directions in each set. Shadows in the imagery from both flights are then identified and masked using a two-step procedure.
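The two-step detection procedure is not detailed in this article, so what follows is only a minimal sketch of replacement-type shadow compensation under loudly stated assumptions: shadows are flagged with a simple mean-brightness threshold (a hypothetical stand-in, not the authors’ detector), and the pre-noon and post-noon orthophotos are assumed to be co-registered on the same pixel grid. A pixel shadowed in one flight is replaced by the co-located pixel from the other flight wherever that pixel is sunlit.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
Image = List[List[RGB]]
Mask = List[List[bool]]


def shadow_mask(image: Image, threshold: float = 60.0) -> Mask:
    """Flag pixels whose mean RGB brightness is below `threshold`.

    Hypothetical stand-in for a real shadow detector; the default threshold
    is illustrative only and would need tuning for real imagery.
    """
    return [[sum(px) / 3.0 < threshold for px in row] for row in image]


def replace_shadows(primary: Image, secondary: Image,
                    threshold: float = 60.0) -> Tuple[Image, Mask]:
    """Fill shadowed pixels of `primary` with sunlit pixels from `secondary`.

    Both images are assumed to share the same pixel grid. Returns the filled
    image and a mask of pixels that are shadowed in both images.
    """
    mask_p = shadow_mask(primary, threshold)
    mask_s = shadow_mask(secondary, threshold)
    filled: Image = []
    unresolved: Mask = []
    for r, row in enumerate(primary):
        out_row: List[RGB] = []
        bad_row: List[bool] = []
        for c, px in enumerate(row):
            if mask_p[r][c] and not mask_s[r][c]:
                out_row.append(secondary[r][c])  # shadow only in primary: replace it
                bad_row.append(False)
            else:
                out_row.append(px)  # sunlit in primary, or shadowed in both flights
                bad_row.append(mask_p[r][c] and mask_s[r][c])
        filled.append(out_row)
        unresolved.append(bad_row)
    return filled, unresolved


if __name__ == "__main__":
    pre_noon = [[(200, 190, 180), (30, 30, 30)],
                [(40, 35, 30), (210, 200, 190)]]
    post_noon = [[(20, 20, 20), (190, 185, 170)],
                 [(35, 30, 25), (205, 195, 185)]]
    image, still_shadowed = replace_shadows(pre_noon, post_noon)
    print(image)
    print(still_shadowed)
```

In the toy example, the top-right pixel is shadowed only before noon and is filled from the post-noon image, while the bottom-left pixel is dark in both flights and is reported as unresolved, which mirrors the dense-canopy limitation the authors describe.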

Ortho-mosaicking, the process used to generate the final orthomosaic of the study area, is performed in Agisoft PhotoScan, which combines the reconstructed mesh with the associated RGB values from the photos to produce digital orthophotography.

Example of the seamless performance of the shadow-treated orthomosaic in shadowed areas.

“Compensation for shadows using multitemporal imagery is usually done with historical data, with images acquired from a different date. Consequently, the radiometry among image dates is expected to vary due to phenological changes, varied sun-surface-sensor geometry, and environmental conditions, making it difficult to produce a seamless mosaic. In contrast, UAV platforms are able to collect data quickly and repeatedly, delivering data with similar illumination conditions and same-day target status,” the authors noted.

The borders of individual photos were another potential concern. In general, the authors found that both the raw and the shadow-treated orthomosaics displayed seamless transitions at the edges of individual photos. In areas of very dense canopy, however, a complex mixture of shadows made it difficult to observe the ground even with data collected at two different times of day.

Overall, this straightforward workflow capitalizes on the strengths and flexibility of the UAV platform to detect and reliably compensate for shadows across a vegetated study area.

Citation: A Workflow to Minimize Shadows in UAV-based Orthomosaics, Dr. Mir Mustafizur Rahman, Prof. Gregory J. McDermid, Mr. Taylor Mckeeman, Ms. Julie Lovitt, Journal of Unmanned Vehicle Systems, https://doi.org/10.1139/juvs-2018-0012